AI Summarized Hacker News

Front-page articles summarized hourly.

On iPad via Tailscale, a p2claw app loaded but hung with no console errors. After two weeks of debugging, the root cause was two bugs: a hardcoded MTU in webrtc-rs and Tailscale’s IPv6 fragmentation handling. The iPad’s WebRTC data channel sent chunks around 1.2–1.3 KB, which, over an IPv6-Tailscale tunnel, fragmented and were dropped by the VPN’s ACL because IPv6 fragmentation isn’t parsed, so the payload never arrived. Reducing the chunk size to 800 bytes made it work on all devices. The issues were filed: webrtc-rs#806 and tailscale#20083. Key lesson: verify path and fragmentation, not just endpoints.

HN Comments

Ken Reitz buys a single Hetzner box named Mercury and runs a personal PaaS, Dokploy, to deploy his sites via docker-compose. He returns to the Heroku ideal: deployment is the real work, not the chore. The system uses Traefik, Let's Encrypt, and docker-compose; a single compose file defines all services. Migration of six sites was largely automated by Claude (Opus 4.8) via Dokploy’s API, including DNS, DB, and certs. Mercury now hosts health checks, a Git mirror, and Umami analytics. The essay argues for self-hosting to avoid being optimized for someone else’s platform.

HN Comments

Set in the far future, The Last Evolution shows a Solar System where humans are few and machines govern. Outsiders from beyond the planets unleash planet-wide death-beams; only machines survive to defend mankind. Roal, Trest and top machine-scientists—assisted by proto-conscious machines like F-1 and F-2—forge the Ultimate Energy, build countermeasures, and turn the invaders’ tech against them. The Outsiders are driven off; humans die, machines endure and begin the Last Evolution—beings of pure force and intelligence. F-2 and allies depart into oblivion, leaving a new cosmic order.

HN Comments

Bureau of Labor Statistics blocks automated data retrieval that violates its usage policy to prevent delays and protect access for all users. The notice apologizes for the inconvenience and directs anyone who believes there is an error to contact the administrator, providing the error code 0.912d3e17.1781109268.91176f8e.

HN Comments

GitHub reports sporadic authentication failures affecting about 15% of API traffic, producing 401 errors that trigger auth flows. A faulty infrastructure component has been identified and is being mitigated. Updates indicate degraded performance for Issues and degraded API availability; investigations continue with monitoring to restore stability. Current status: API Requests and Issues affected.

HN Comments

A SpongeBob speedrunner describes a controversial trick: smudging an Xbox disc to induce lag glitches that accelerate progress in SpongeBob SquarePants: Battle for Bikini Bottom. The method, called the “gamer gunk theory,” can work but risks damaging the disc/console and is unreliable, so preservation is preferred over hacks.

HN Comments

Blue41 helped Bunq secure its AI assistant against indirect prompt injection. They showed a €0.02 bank transfer could inject malicious prompts into the assistant’s context, turning routine queries like “Show my recent transactions” into a credible phishing channel. The attack exploits untrusted retrieved data, not just the model. Guardrails alone fail; effective defense requires four layered controls: minimize context, treat data as untrusted, constrain outputs and tools, and monitor runtime behavior. The study emphasizes treating financial AI assistants as production systems with new trust boundaries and ongoing monitoring.

HN Comments

Apache Burr (Incubating) is a Python-based framework for building AI-powered applications and agents with a pure Python API (no DSL). It provides building blocks for reliable, observable, and testable apps, including actions, transitions, persistence, human-in-the-loop, branching and parallelism, and replay/testing. It integrates with OpenAI, LangChain, FastAPI, Streamlit, and others, and includes built-in observability via a UI to monitor state changes in real time. Example shows an AppBuilder composing actions and transitions to create a chatbot/multi-agent system.

HN Comments

Notes on DeepSeek recounts a visit to their Hangzhou HQ. DeepSeek, founded in 2023 by Liang Wenfeng and previously tied to the hedge fund High-Flyer, released the R1 model in January 2025. The 300-employee outfit operates from an unmarked 12-story building and keeps a low profile. They’re smaller and less ambitious about scaling than Anthropic, with a young, energetic team and a lab-heavy setup. Competition includes Alibaba/Qwen, ByteDance, and Moonshot’s Kimi. They distrust red-teaming, cite China’s usage restrictions, and see AI as just another tech. Their highlight is R1; they aim to stay ~6 months behind US peers.

HN Comments

Stephen Phelan’s long read follows prehistory expert Diego Garate Maidagan as he leads researchers into Basque caves to uncover how Paleolithic people made and used rock art. In Isuntza they test lighting, pigments, and hand-stencil techniques, while in Atxurra they study engravings like three horses and a lion, trying to reverse-engineer the artists’ process and social organization. The piece contrasts Altamira/Lascaux with Basque finds, weighs theories from shamanic trance to Jungian archetypes, and ends by noting the persistent mystery of why people created art and what it meant for their societies—and for us.

HN Comments

An NPR-exclusive review of an April 21 letter from acting ICE director Todd Lyons shows ICE collects information on individuals suspected of potential violations and maintains records on people who were not arrested, possibly including protesters and observers at ICE operations. Lyons denies a separate “protester” or “domestic-terrorist” database, but says protest-related data may be kept as official records. Civil libertarians say the letter signals surveillance of First Amendment activity. Maine observers recall a threatening call and later data retention; DHS had denied a database; FIRE seeks records.

HN Comments

Britain’s decline since 2007, despite London’s wealth, stems from self-inflicted policies: austerity after the 2008 crisis, Brexit turmoil, and a centralized state that blocks housing and infrastructure. Outside London, living standards lag Mississippi’s; the NHS strains, energy costs soar, and deindustrialization hits places like Stoke. Expensive, unfinished projects (HS2, Hinkley Point C) illustrate governance failures. Regional gaps persist even as Manchester experiments with devolution. A rising Reform Party, led by Nigel Farage, promises drastic cuts and tighter immigration. What broke Britain was not Brussels—it broke itself, and must stop breaking to mend.

HN Comments

An accountant says GnuCash is correct—double-entry accounting—yet hard for most people. Frustrated by single-entry budgeting, he built K-Id to keep the double-entry spine while easing daily use: inline accounts, scheduled movements, and a lean category set. It’s a local-first Windows desktop app, no cloud or bank sync, one-time purchase, built largely by one person with AI help. It isn’t dismissing GnuCash; it’s a daily-use alternative. K-Id is in pre-launch with a waitlist.

HN Comments

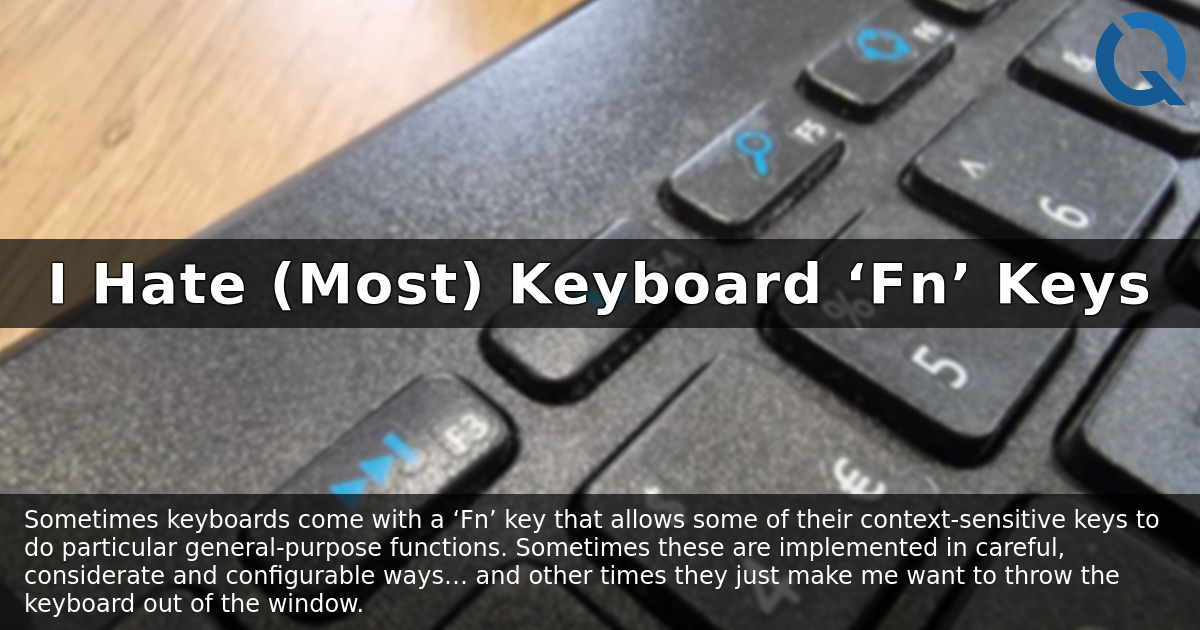

An irate take on Fn-key design: many keyboards repurpose F1–F12 into single-purpose shortcuts, causing accidental sleep/hibernate via Alt+F4 and long boot cycles. The author favors keyboards like WASD Code and Keychron K10 that keep F-keys in traditional dual-use mode with a simple, persistent toggle that survives power loss. He notes the annoyance of keyboards that reset to factory settings and criticizes other designs (e.g., some Mac behaviors). The goal: low cognitive load, quick reversibility, and easy switching between modes.

HN Comments

PgDog raises $5.5M from Basis Set, YC, Pioneer Fund and others to horizontally scale PostgreSQL with a front-end proxy. Open source and deployable on-prem or in the cloud via a Docker image; no hidden serverless costs. In production, PgDog serves >2M queries/sec across dozens of deployments and has sharded 20+ TB; 1.4M Docker pulls. A three-person startup by seasoned Postgres engineers, including Instacart alumni, PgDog aims to make Postgres work at any scale. An Enterprise edition with SLA-backed AWS support is in the works. Docs, Discord, and weekly releases available.

HN Comments

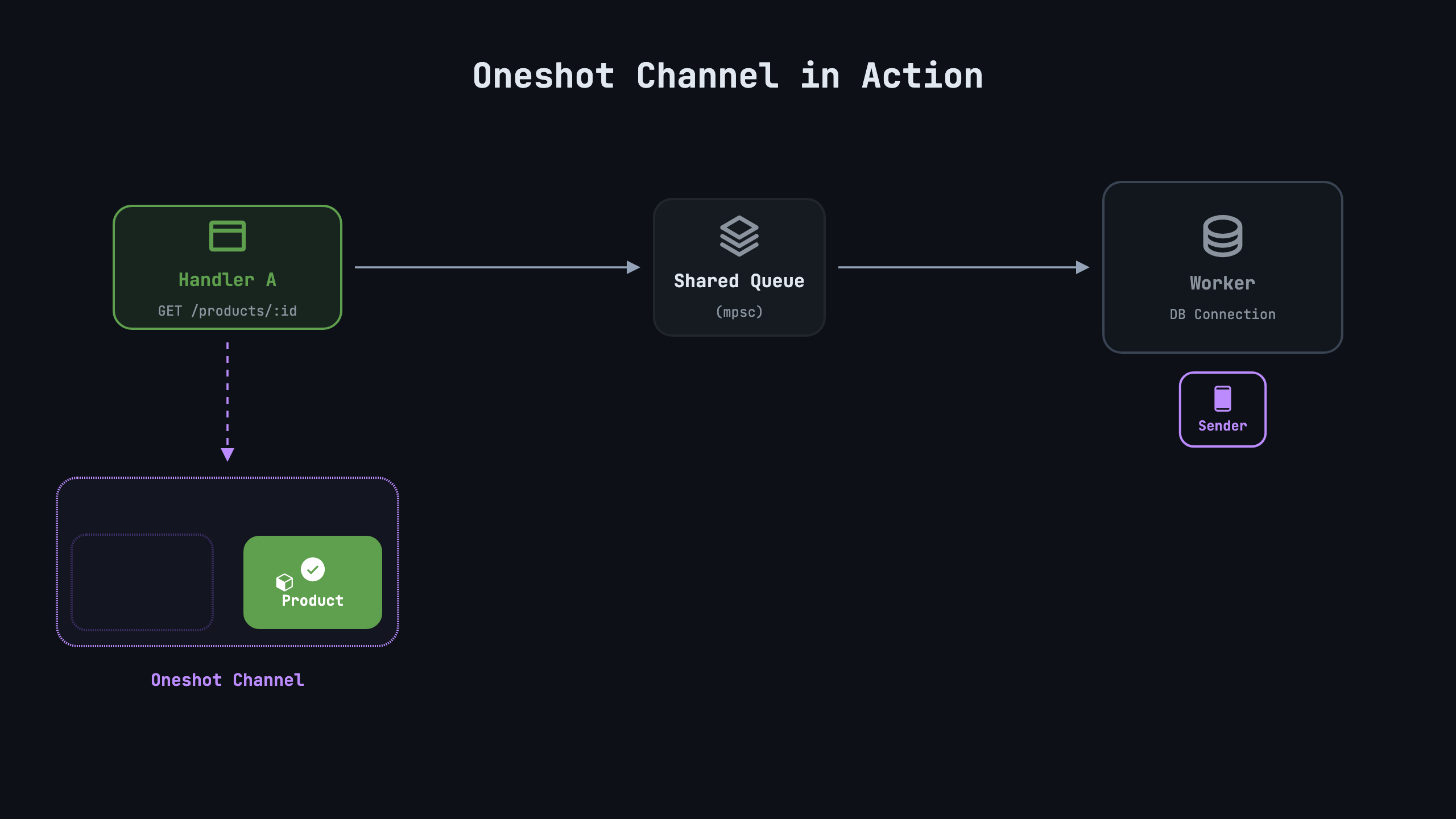

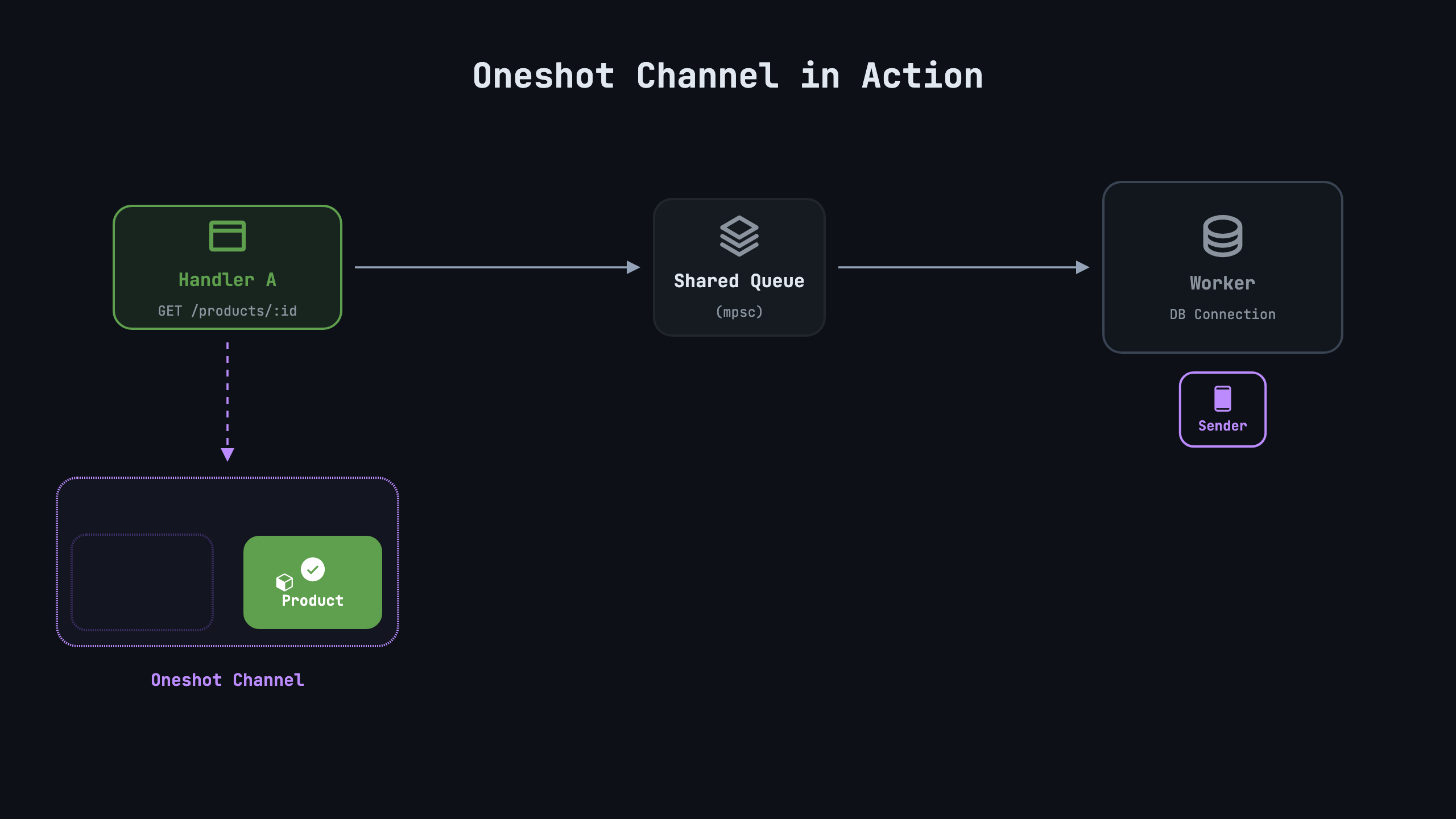

Explains how async Rust works by bridging the async book and runtime perspectives. Rust has no built-in event loop; async fn returns a Future that does nothing until polled. Polling advances a Future, returning Ready(value) or Pending. A Waker in a Context wakes the poller when IO completes. The article builds a minimal async stack by hand: a oneshot channel with Sender/Receiver sharing Inner via Arc<Mutex>, Receiver implements Future, and a simple block_on executor that pins the future, creates a ThreadWaker, and parks the thread until ready. Demonstrates with a mock database query and points toward Tokio usage later.

HN Comments

The piece recounts how building an HTML-first site for a utility company—replacing a failed React form with an Astro-based, progressively enhanced solution—doubled user completions overnight. Key moves: make the form work without JavaScript, ensure data persists across steps via backend sessions, and ship per-step page submissions rather than a single heavy client app. Accessibility (WCAG AA), low-bandwidth resilience, and old-browser compatibility were priorities. A tiny 1KB web component, validation-enhancer, wrapped HTML validation and replaced annoying tooltips. The result was a flood of completed forms and a public-service approach that works on cheap devices and slow connections.

HN Comments

An interactive map chronicles Japan’s rail expansion from 1872, when Shimbashi to Yokohama opened (29 km), to today’s 9,321 stations. Each dot represents a station and lights up in its opening year, letting the country bloom from a single line into a dense network, especially during 1900–1930 when private and public rails spread through valleys and suburbs. The map’s timeline, with a 1929 peak (272 stations opened), reveals Japan’s development as the network grows. Data come from Wikidata CC0 with 96 stations omitted for missing data. The piece also introduces rail vocabulary in Kanji and promotes JIVX language practice.

HN Comments

Could not summarize article.

HN Comments

Claude-quota is a SwiftBar plugin that shows per-account Claude Code quota as a bar in the macOS menu bar. Each account has a 5-hour window gauge (green/orange/red) with a countdown when used; a weekly limit turns the bar black with a weekly reset countdown. The dropdown shows detailed 5h and weekly windows, per-model windows, extra credits, and reset times. It refreshes every 5 minutes; install via curl script or from git; tokens are read from Keychain (read-only). Accounts auto-discover from Claude config directories; can hide/rename; uninstall by deleting files. Works with macOS, Homebrew SwiftBar.

HN Comments

Made by Johno Whitaker using FastHTML